This is my first post featuring the Racket language. At some point we may start evangelizing and looking down our ...

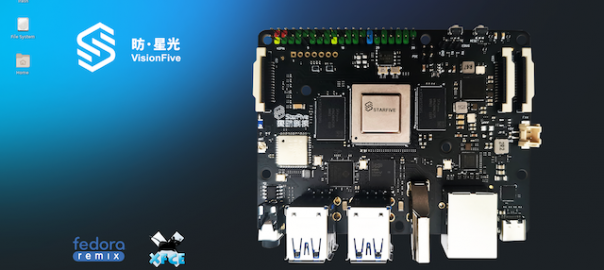

Getting Started with StarFive VisionFiveGetting Started with StarFive VisionFive

I’ve been excited to get my hands on a StarFive VisionFive and it finally arrived last week after being on ...

swiftformat, Decent Swift Syntax, and Sublime Textswiftformat, Decent Swift Syntax, and Sublime Text

If you’re writing Swift code for iOS you’re most likely going to be doing so in Xcode. If you’re coding ...

Swift on Linux In 2021Swift on Linux In 2021

It has been nearly 6 years since Apple first open sourced the Swift language and brought it to Linux. In ...

Yes You Can Run Homebrew on an M1 MacYes You Can Run Homebrew on an M1 Mac

One of the reasons I took the plunge and bought an M1-based Mac is to test out its performance and ...

Detecting macOS Universal BinariesDetecting macOS Universal Binaries

Apple has transitioned from different instruction set architectures several times now throughout its history. First, from 680×0 to PowerPC, then ...

macOS Bundle Install and OpenSSL GemmacOS Bundle Install and OpenSSL Gem

From time to time you run into an issue that requires no end of Googling to sort through. That was ...

Migrating Your Nameservers from GoDaddy to AWS Route 53Migrating Your Nameservers from GoDaddy to AWS Route 53

I can already hear the rabble shouting, “Why would you use GoDaddy as your domain registrar?!” A fair question, but ...

Selecting and Prioritizing Business ProjectsSelecting and Prioritizing Business Projects

First off, this post is not about how to manage your business projects but is instead about how to decide ...

Encrypting Existing S3 BucketsEncrypting Existing S3 Buckets

Utilizing encryption everywhere, particularly in cloud environments, is a solid idea that just makes good sense. AWS S3 makes it ...